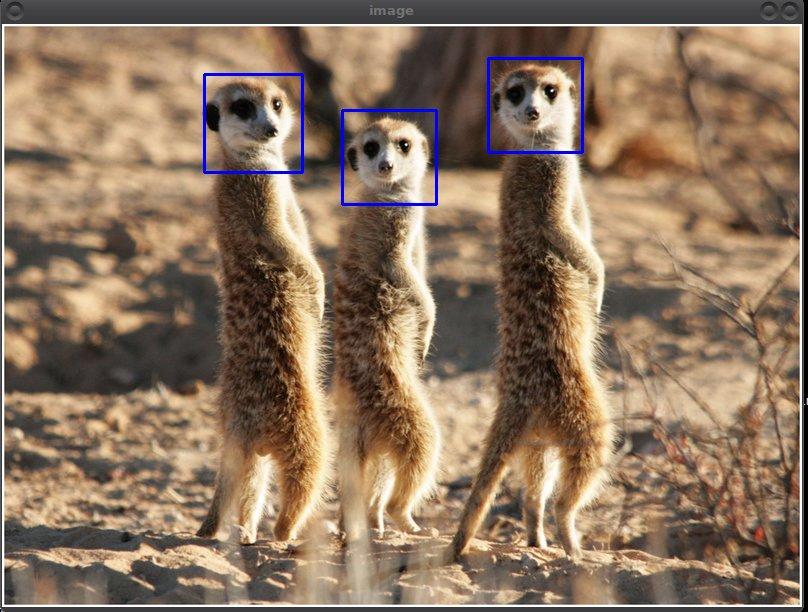

Imagine you wanted to make a classifier that could reliably identify meerkat faces. You would need a lot of images of typical meerkat faces. Unfortunately most of the photos of meerkats you find are like this.

Here’s another photo of some nice San Diego meerkats.

Those images are going to be difficult to use to train a machine learning classifier to recognize meerkat faces.

I wrote a little Python program which uses OpenCV to allow a curator to quickly go through a set of stock images and manually extract useful features into nicely sized (generally square as shown here) sub images. This makes it relatively easy to pull out just the cars, or just the faces, or just the signs, etc. It’s not automatic but it does reduce the donkey work to a minimum.

Here’s how it is used. If the previous images were m1.jpg and

m2.jpg, the program would be run like this.

roi_sq_selector.py -p meerkat m1.jpg m2.jpgEach of the input images is then presented in sequence. While the

image is visible you can click on it and it will draw a 64x64 box and

capture a sub image centered on the click. Or you can press down the

mouse button on the center of the image, drag to the edge of the

box you want, and release the mouse button. This box will then be

scaled to NxN (N=64 for this project) and saved in a unique numbered

file with a prefix of meerkat.

In about 11 seconds I converted the two photos I show above to these 7 standardized images ready for training.

I have used this technique on many different training projects. I have been able to quickly go through video frames and, with minimum tedium, extract 1000s of regularized images suitable for classifier training.

Here’s the code.

#!/usr/bin/python # roi_sq_selector.py # Chris X Edwards - 2017-04-30 # # usage: roi_sq_selector.py [-h] [-p PREFIX] file [file ...] # # positional arguments: # file One or more filenames. # # optional arguments: # -h, --help show this help message and exit # -p PREFIX, --prefix PREFIX # Output filename prefix # # * Press "c" to change to the next source image. # * Press "r" to reset boxes drawn on image. # # Interactive way to clip out square regions of interest from images. # Select the center of the ROI first. Then select one corner of the # square box which should evenly contain it. If the box is not # N it is resized to be N. # # Modified from an idea by Adrian Rosebrock. # http://www.pyimagesearch.com/2015/03/09/capturing-mouse-click-events-with-python-and-opencv/ import argparse import cv2 N= 64 # Minimum box size allowed. iN= 0 # Output image number. C= None # Center point (x,y) P= None # Corner point (x,y) cropping= False # In box selection mode awaiting 2nd point? def box_from_input(c,p): # Box coords from center (c) and corner point (p). cx,cy= c ; px,py= p d= max(abs(cx-px),abs(cy-py)) if d < N//2: d= N//2 return (cx-d,cy-d),(cx+d,cy+d) def click_and_crop(event,x,y,flags,param): global C,P, cropping, iN, A if event == cv2.EVENT_LBUTTONDOWN: # Looking for center. C= (x,y) cropping= True elif event == cv2.EVENT_LBUTTONUP: # Looking for corner. iN+= 1 P= (x,y) cropping= False b1,b2= box_from_input(C,P) cv2.rectangle(image, b1, b2, (255,0,0),2) cv2.imshow("image",image) roi= clone[b1[1]:b2[1],b1[0]:b2[0]] roi= cv2.resize(roi,(64,64)) ofn='%s%03d.png'%(A.prefix,iN) cv2.imwrite(ofn,roi) print("Saved image section: %s"%ofn) # Something like this to make live drag boxes. #elif event == cv2.EVENT_MOUSEMOVE and cropping: # sel_rect_p2= [(x, y)] # Another global. ap= argparse.ArgumentParser() ap.add_argument('-p','--prefix',required=False,help='Output filename prefix',default="roi") ap.add_argument('images', metavar='file', type=str, nargs='+', help='One or more filenames.') A= ap.parse_args() for ifn in A.images: image= cv2.imread(ifn) clone= image.copy() cv2.namedWindow('image') cv2.setMouseCallback("image",click_and_crop) while True: cv2.imshow('image',image) key= cv2.waitKey(100) & 0xFF if key == ord('r'): # Reset if "r" is pressed. image= clone.copy() elif key == ord('c'): # Finish if "c" is pressed. break cv2.destroyAllWindows()